I was referring to the "if keep=1" sample inequality based approach of having the same set of randomly split data. I hope it's a bit clearer now, but let me know if you'd like to discuss it further or if there is any area where you want more clarity or specifics. With the advent of the big data craze, it would be great to have smoother, more scalable random split functionality.
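To make the "keep" indicator idea concrete, here is a minimal sketch in Python rather than EViews code. The function name `random_split` and the `train_fraction` and `seed` arguments are my own illustrative names, not an existing EViews feature. The point is that a fixed seed gives the same randomly split data every time, and the fraction is configurable.

```python
import random

def random_split(n_obs, train_fraction=0.8, seed=0):
    """Return a 0/1 indicator list: 1 = estimation sample, 0 = held-out sample.

    Hypothetical helper for illustration only.
    """
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must be strictly between 0 and 1")
    n_train = round(n_obs * train_fraction)
    idx = list(range(n_obs))
    # A seeded generator makes the split reproducible across runs.
    random.Random(seed).shuffle(idx)
    keep = [0] * n_obs
    for i in idx[:n_train]:
        keep[i] = 1
    return keep

keep = random_split(n_obs=100, train_fraction=0.8, seed=42)
train_rows = [i for i, flag in enumerate(keep) if flag == 1]  # the "if keep=1" sample
test_rows = [i for i, flag in enumerate(keep) if flag == 0]   # the set-aside sample
```

Estimating on `train_rows` and evaluating on `test_rows` corresponds to restricting the sample to keep=1 and keep=0 respectively.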

I realize that this is already feasible in EViews 9, but it's not very intuitive and doesn't scale up well. For smaller data sets, on the other hand, k-fold cross-validation splitting is useful because all observations 'take turns' being part of different partitions of the data.
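As a rough illustration of what 'taking turns' means, here is a small Python sketch (not EViews code, and not the plug-in's implementation). The name `k_fold_indices` and its arguments are hypothetical. Each observation ends up in the test partition exactly once and in the training partition for all the other folds.

```python
import random

def k_fold_indices(n_obs, k, seed=0):
    """Yield (train, test) index lists for k-fold cross-validation.

    Hypothetical helper for illustration only.
    """
    idx = list(range(n_obs))
    random.Random(seed).shuffle(idx)           # randomize before cutting into folds
    folds = [idx[i::k] for i in range(k)]      # k roughly equal-sized folds
    for i, test in enumerate(folds):
        # Training set = every fold except the current test fold.
        train = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield sorted(train), sorted(test)

splits = list(k_fold_indices(n_obs=10, k=5))
for train, test in splits:
    print("train:", train, "test:", test)
```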

Random split is a way to randomly partition the data into groups. It probably goes by other names; I just know it by this particular convention. There are several reasons why random splitting is used, a few being to avoid overfitting and to make sure the model generalizes or forecasts well across different data sets. For example, after the random split partitions the data, the 80% portion of the original data set would be used for estimation as normal, and the remaining 20% is kept aside or sometimes split further. The 20% portion then helps to reduce overfitting: you can compare the sum of squares and other metrics to assess model performance, and implement some iterative algorithms to find the right coefficients based on how the model performs on the different partitions of the data. It would make sense, though, for the partition fraction to be user configurable, so we can split the data however we need. For large data sets, a static.

With regards to ML, a more refined random split approach will make it much smoother for us all to follow the elementary machine learning block diagram: getting data, splitting data, getting a validation set and a test set, then of course the quality metric, minimizing true error, etc. So there might have to be a slightly new feature for the equation's sample UI box. This probably isn't the only way to handle it, but maybe it can jostle our imaginations awhile.

Keep up the great work, EViews has really served me well.

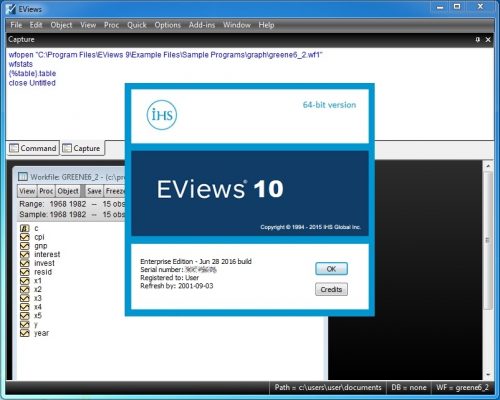


I would love to have a more discreet way of doing a random split. Whether it is a 2 set aside split or k-fold CV, EViews currently needs series objects to hold the randomly split data. If you are performing a high number of splits, the workfile can become crowded with series objects, and labeling the series with intuitive names becomes more difficult. Not to mention that k-fold is only available via a plug-in subroutine and is not very user-friendly (although I appreciate the author's effort).

My humble idea is to have random splits somehow coupled with the equation object, so that no extra series objects are needed for a random split, but the equation will be able to read the random split argument.